Last year I got to do a precon on Power BI for the Pittsburgh SQL Saturday. This year I’m honored to be presenting at Cleveland and Cincinnati. This time I thought it would make sense to have a blog post summarizing what’s covered.

One of the things that I found frustrating when I first learned about Power BI was all of the behind-the-scenes stuff. It was easy to find information about charts and graphs, but less so about how everything fits together. This precon focuses on two main areas: data wrangling and administration.

Session 1: Database Theory

Because Power BI is aimed at business users in large part, there are many people using it who don’t have a traditional data background. This means it’s worth touching on some of the fundamentals such as primary keys, normalization and star schema.

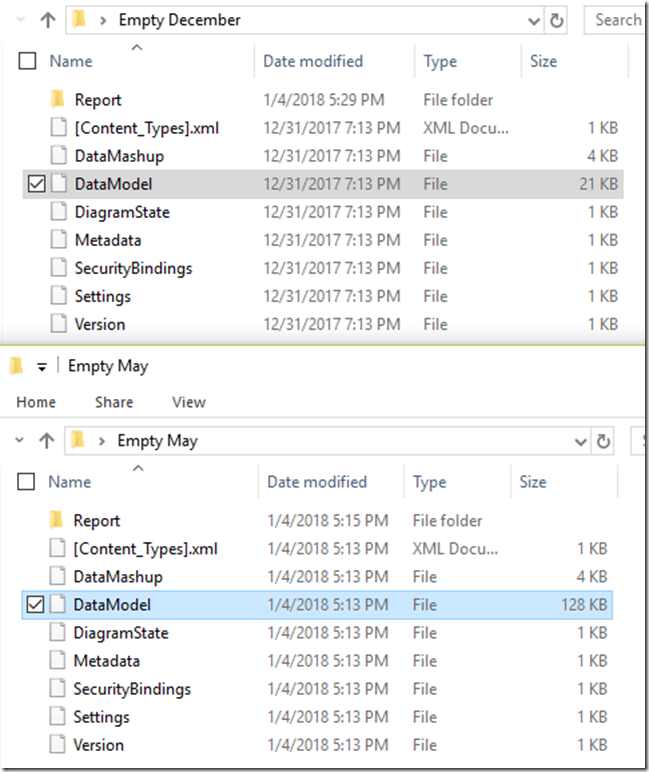

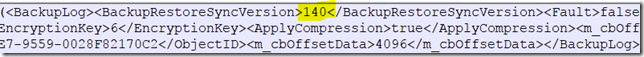

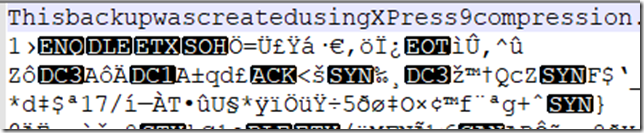

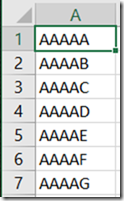

The most important thing to understand when modeling for Power BI is that it’s optimized for star schema in particular and filtering/aggregating in general. The fact that it’s a columnar database means it can handle a certain amount of flattening/denormalizing gracefully, because it has really good compression.
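To get a feel for why repeated values in a flattened column are cheap to store, here is a small Python sketch of two compression tricks that columnar engines commonly use: dictionary encoding and run-length encoding. This is a simplified illustration, not a description of how Power BI stores data internally; the function names and the sample `category` column are made up for the example.

```python
def dictionary_encode(column):
    """Replace each value with a small integer index into a list of distinct values."""
    dictionary = []   # distinct values, in order of first appearance
    index_of = {}     # value -> position in dictionary
    encoded = []
    for value in column:
        if value not in index_of:
            index_of[value] = len(dictionary)
            dictionary.append(value)
        encoded.append(index_of[value])
    return dictionary, encoded


def run_length_encode(codes):
    """Collapse consecutive repeats into [code, count] pairs."""
    runs = []
    for code in codes:
        if runs and runs[-1][0] == code:
            runs[-1][1] += 1
        else:
            runs.append([code, 1])
    return runs


# Hypothetical "category" column after flattening a product dimension
# into a sales table: the same text repeats on many rows.
category = ["Bikes"] * 4 + ["Helmets"] * 3 + ["Bikes"] * 2

dictionary, encoded = dictionary_encode(category)
runs = run_length_encode(encoded)

print(dictionary)  # each distinct string is stored once
print(runs)        # nine rows collapse into three runs
```

Nine rows of text end up as two dictionary entries and three runs, which is why a bit of denormalizing usually doesn't hurt much in a columnar store.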

Session 2: Power Query

One of the things that can be confusing is that Power BI has 2 different data manipulation languages, M and DAX. (3 languages if you count R!) So a question that comes up a lot is when to use which language.

Power Query is designed primarily for business users, especially since it started as an Excel add-in. In fact, the official Microsoft litmus test is that it was designed for users who get value from the Excel formula bar in their work. As a result, it has a strong GUI component, but is really basic in a lot of ways.

The way I like to think of it is “Anything you could pay someone minimum wage to do in Excel, you can automate in Power Query.” Power Query is all about basic cleanup and data prep. You aren’t going to be adding a lot of meaning to the data.
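To make "basic cleanup" concrete, here is a rough Python sketch of the kind of work I mean. It is not M code, and the column names and sample rows are invented. In Power Query you would typically click through equivalent steps in the GUI (trim, capitalize each word, remove blank rows, change type) rather than write anything by hand.

```python
# Hypothetical messy export, the kind someone might fix by hand in Excel
raw_rows = [
    {"Customer": "  alice SMITH ", "Amount": "1,200"},
    {"Customer": "", "Amount": ""},
    {"Customer": "Bob Jones", "Amount": "85"},
]


def clean(rows):
    """Trim names, fix casing, drop blank rows, and convert amounts to numbers."""
    cleaned = []
    for row in rows:
        name = row["Customer"].strip()
        if not name:
            continue  # skip rows with no customer name
        cleaned.append({
            "Customer": name.title(),
            "Amount": int(row["Amount"].replace(",", "")),
        })
    return cleaned


cleaned_rows = clean(raw_rows)
for row in cleaned_rows:
    print(row)
```

Nothing here adds meaning to the data. It just gets the data into a shape you can model.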

Session 3: DAX

DAX is the language you are going to use to model your data and add meaning to it. DAX is deceptively simple, looking very similar to Excel formulas. In reality, the learning curve on DAX can be quite painful, because it requires thinking in terms of columns and filters, not in terms of rows.
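The shift in thinking is easier to see with a small example. The sketch below is a Python analogy, not DAX: the `sales` table and the `total_amount` function are hypothetical. The idea is that you define one calculation over a column, and the result changes depending on which filters are applied to other columns, much like a measure responding to slicers.

```python
# Hypothetical sales table stored column by column
sales = {
    "region": ["East", "West", "East", "West", "East"],
    "year":   [2017, 2017, 2018, 2018, 2018],
    "amount": [100, 200, 150, 250, 50],
}


def total_amount(table, **filters):
    """Sum the amount column over the rows that pass every column filter."""
    keep = [True] * len(table["amount"])
    for column, wanted in filters.items():
        # Narrow the set of visible rows one column filter at a time
        keep = [k and (value == wanted) for k, value in zip(keep, table[column])]
    return sum(amount for amount, k in zip(table["amount"], keep) if k)


# Same calculation, three different sets of filters
all_sales = total_amount(sales)
east_sales = total_amount(sales, region="East")
east_2018_sales = total_amount(sales, region="East", year=2018)

print(all_sales, east_sales, east_2018_sales)
```

Notice that nothing in `total_amount` loops over rows asking "what should this row be?" The filters decide which rows are visible, and the calculation aggregates whatever is left.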

Session 4: Data Gateways

Data gateways are the way that you bridge the cloud Power BI service to whatever data lives on premises. Installation and configuration are pretty simple overall. Data gateways allow for scheduled refreshes of your data up to the cloud.

One thing that’s worth knowing about is the alternative query methods available with gateways. By using DirectQuery or live connections, you can query live data without having to export it all to the cloud.

Related course: Leveraging Timely On-premises Data with Power BI

Session 5: Licensing and deployment

With Power BI, generally you are going to be buying Pro licenses for all of your users, at $10 per month. However, there are other licensing scenarios such as Power BI Report Server and Power BI Premium. But you are probably going to be going with the Pro license.

There are so many ways to publish Power BI reports:

- Personal workspaces

- App Workspaces

- Organizational content packs

- Publish to web

- SharePoint

- Power BI Premium

- Power BI Embedded

- Power BI Report Server

It can get a bit difficult to keep up with all of the options.

Session 6: Security and Auditing

There are three big pieces to securing Power BI: what data can be accessed, what reports can be accessed, and what people can share. In addition to that, there are interesting features with row-level security built in to Power BI as well as SSAS.

In terms of auditing, much of that is going to be based on the Unified Audit log for Office 365, which requires some work to enable. There are also things you can do with PowerShell and with auditing data gateways.

Overview

Overall I’m pretty proud of the contents. This is the kind of precon I wish I had been able to attend 3 years ago so I had an idea of what I was doing.